STORY OF THE MONTH

Working Together for a Better Future

Apr 2026

Fabrizio Sisinni

Chapter 1: A Whisper from the Future

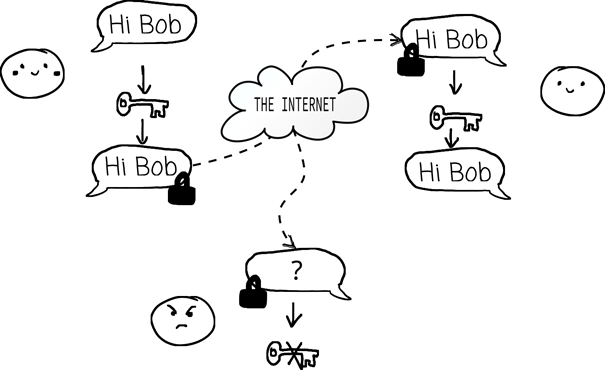

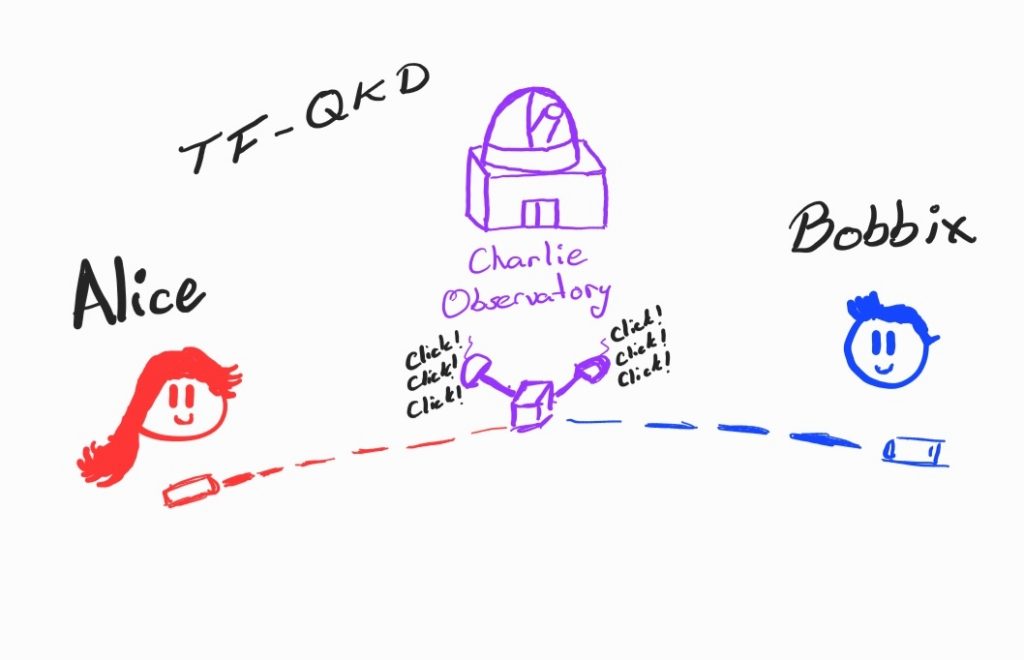

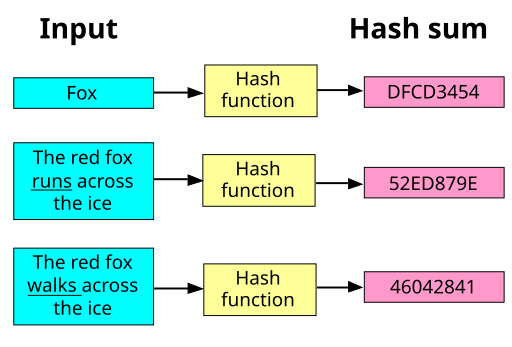

For decades, the world’s secrets traveled quietly through invisible tunnels. Every time someone sent a message, checked a bank account, updated a medical record, or unlocked a phone, a silent ritual took place. Tiny mathematical puzzles protected everything. These puzzles were so cleverly designed that even the fastest computers on Earth would need thousands of years to solve them without the proper key. People trusted these puzzles. The digital world was built upon them. Then, one day, a whisper began to circulate through the scientific community.

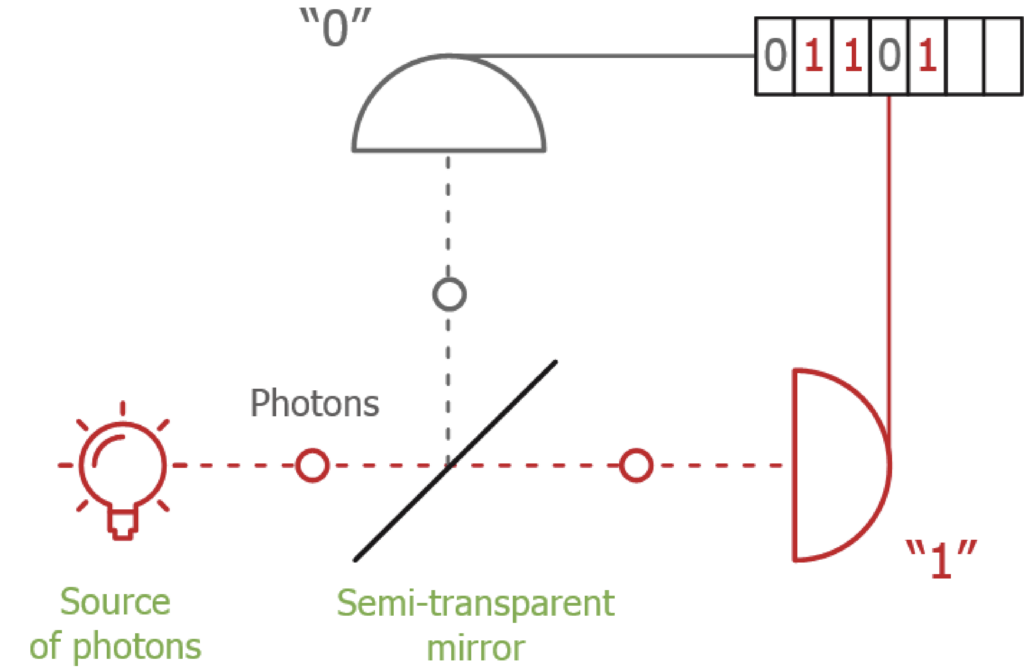

Quantum computers.

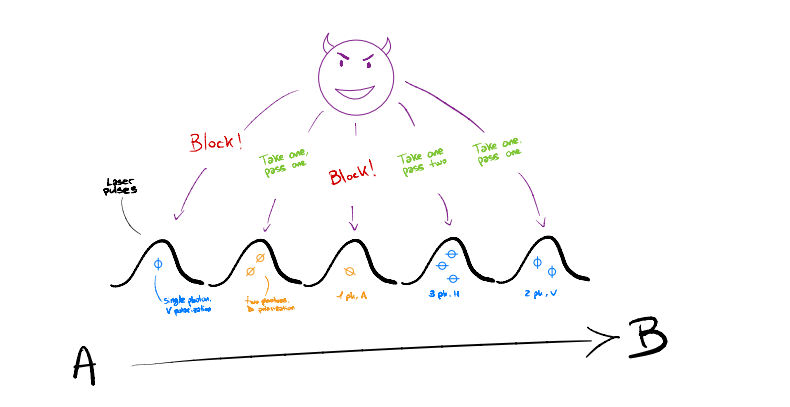

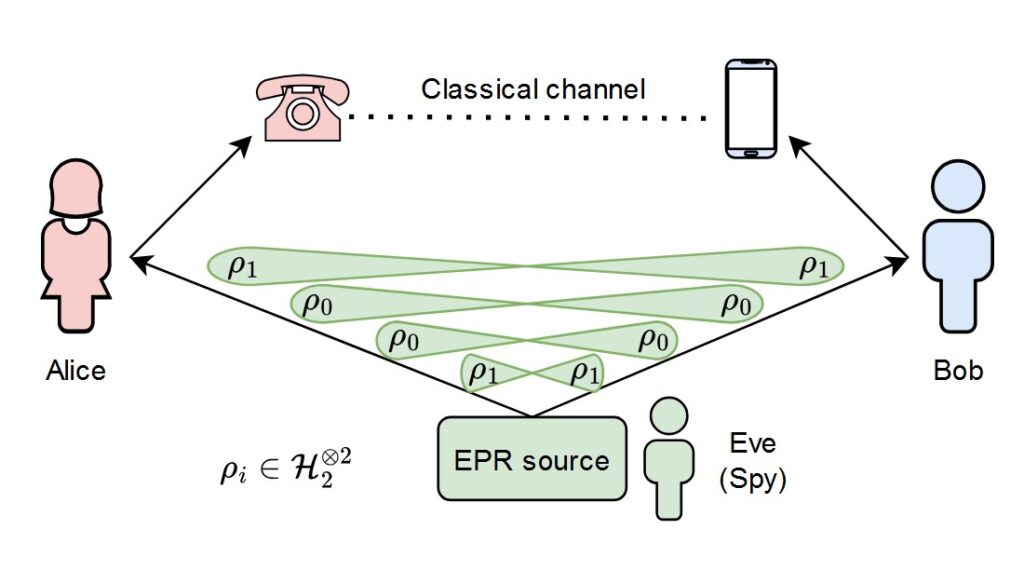

Not the kind of machines people kept on their desks. These were strange, almost magical devices built on the laws of quantum physics. Instead of thinking in bits (zeros and ones), they thought in probabilities, superpositions, and entanglement. And for certain mathematical problems, they promised something extraordinary: speed beyond imagination.

The whisper soon turned into a clear realization.

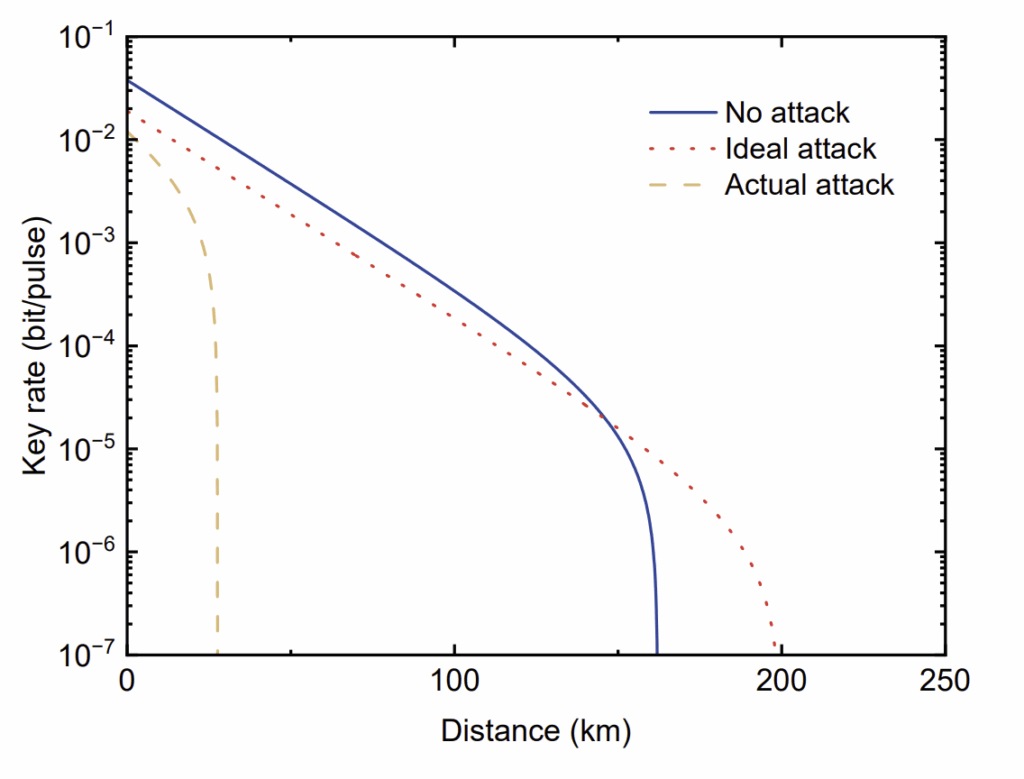

If large-scale quantum computers became real, many of the puzzles protecting the world’s secrets would no longer be safe. Problems that would take classical computers centuries could, in theory, be solved in hours or even minutes. The locks on the world’s digital doors might suddenly become fragile.

It wasn’t an immediate disaster. Quantum computers powerful enough to break today’s cryptography did not yet exist. But they were no longer science fiction. They were a research goal, steadily pursued in laboratories around the globe.

The threat was not today. It was tomorrow. And tomorrow was coming.

Chapter 2: A Call to Action

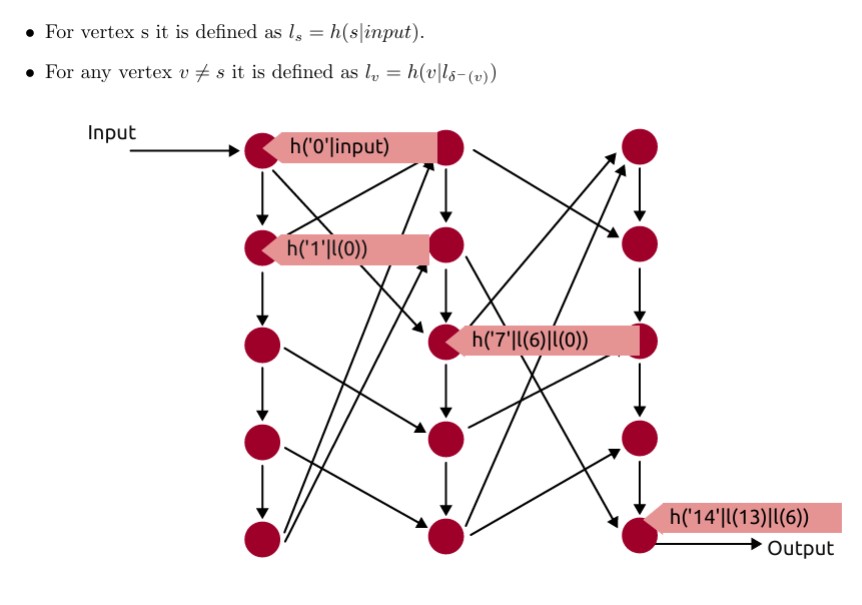

When the warning became impossible to ignore, a call went out: not to a single lab, not to a single country, but to a global network of researchers who had been quietly thinking about this problem for years. In truth, the work had started long before the alarm bells rang. Some mathematicians had begun studying quantum-resistant cryptography decades earlier, motivated not by fear but by curiosity. They explored strange mathematical structures, such as high-dimensional lattices, error-correcting codes, and intricate systems of equations, wondering whether these could form the basis of future cryptographic protocols.

When the prospect of powerful quantum computers became more tangible, those ideas moved from the margins to the center. The call was clear:

We must prepare new cryptographic protocols that can survive in a quantum world.

And so proposals began to flow in.

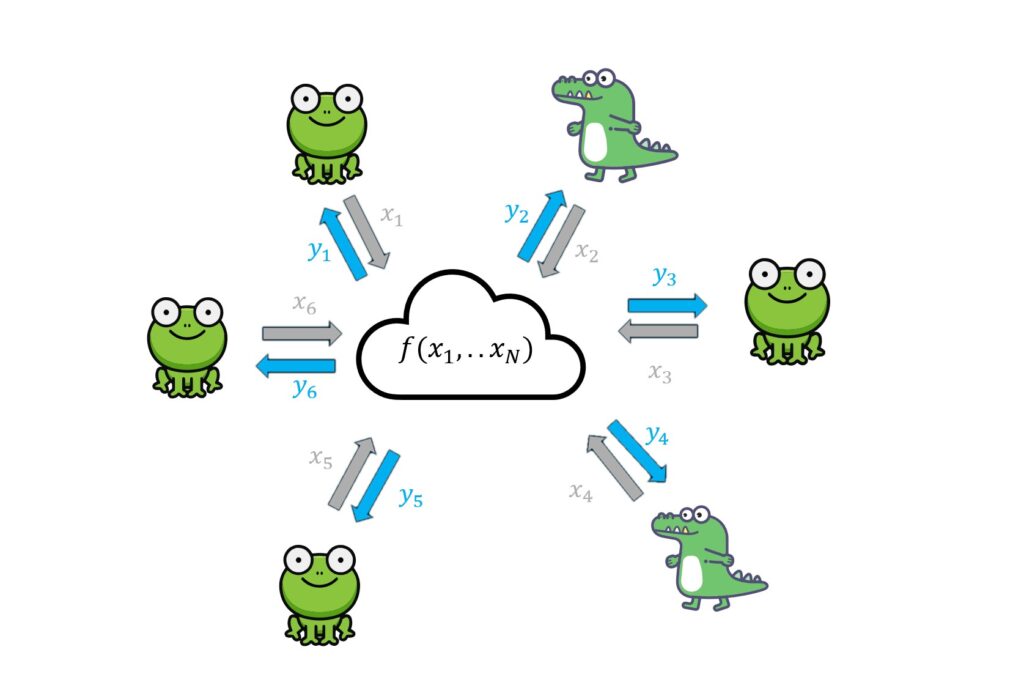

Teams formed across universities, research institutes, startups, and established technology companies. Some groups were large and well-funded. Others were small collaborations held together by shared passion and late-night video calls across time zones. Each team believed their mathematical approach offered the strongest foundation for secure communication in the decades to come.

The atmosphere was energetic, but far from peaceful.

Researchers debated which mathematical assumptions were truly trustworthy. Was one problem really harder than another? Could a clever quantum algorithm undermine an entire family of designs? Some argued for conservative choices, favoring constructions that seemed mathematically robust even if they were less efficient. Others pushed for faster, more practical solutions that would be easier to deploy at global scale.

Papers were published openly. Code was shared. Presentations were given at conferences packed with skeptical audiences. Questions were sharp. Criticism was direct. No proposal was accepted on reputation alone.

And yet, beneath the disagreements, there was a shared understanding: this was not a race for prestige. The stakes were too high. Modern life (financial systems, healthcare infrastructure, private communication, national security) relied on cryptographic protocols that might one day become vulnerable.

The community did not agree on everything. In fact, they agreed on very little at first. But they agreed on this: the problem was real, and it required collective effort. So they built. They argued. They revised. They improved.

The call had been answered, not with a single voice, but with a chorus of competing ideas, all striving to shape the future of secure communication.

Chapter 3: The Trial by Fire

If proposing new cryptographic protocols required creativity, what followed required something else entirely: suspicion.

Every design that had been proudly introduced to the world now faced its true test. The same community that had applauded elegant ideas turned into a room full of critics. Their task was simple in principle and ruthless in practice:

Assume it is flawed. Now prove it.

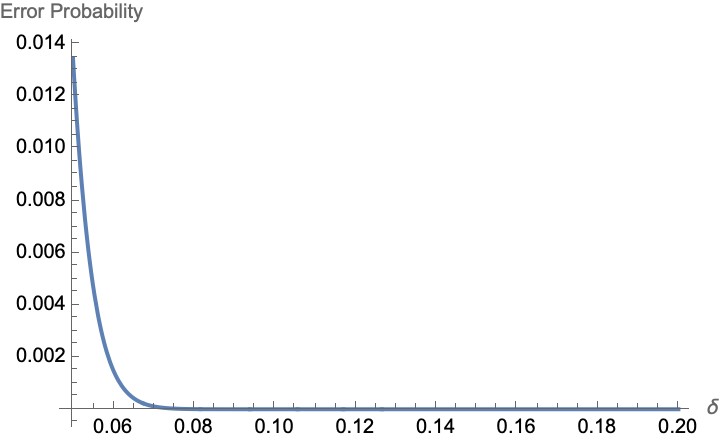

The evaluation process unfolded in public rounds. Protocols were measured not only by their theoretical security, but also by their efficiency, their flexibility, and their practicality in real-world systems. Could they run on small devices with limited memory? Could they be integrated into existing internet standards without breaking everything? Could they be implemented safely without opening the door to subtle side-channel attacks?

But the heart of the process was cryptanalysis, the art of breaking things.

Researchers dissected each proposal line by line. They examined the mathematical assumptions behind them. They searched for shortcuts, hidden symmetries, unexpected patterns. Some attacks were dramatic, collapsing entire schemes in a single paper. Others were quieter but equally devastating: a parameter slightly too small, a proof relying on a fragile assumption, a corner case that no one had noticed before.

There were tense moments.

Teams whose designs had seemed promising saw them dismantled in public discussions. Years of work could be undone by a clever insight from a graduate student on another continent. Debates grew heated at conferences and workshops. Was a break truly practical, or merely theoretical? Should a weakened protocol be repaired or discarded? How much margin of safety was enough?

Not all disagreements were purely scientific. Questions of performance and deployment surfaced again and again. A protocol that was extremely conservative might be slower and harder to adopt. A more efficient one might rely on assumptions that some researchers considered less mature. Choosing between them was not a matter of elegance alone; it required judgment.

And yet, something remarkable happened.

When a protocol was broken, its designers did not disappear. Often, they joined the effort to test the remaining candidates. The goal was not to protect one’s own creation, but to ensure that whatever survived could endure not just today’s scrutiny, but decades of future advances, including advances no one could yet predict.

Over time, the field narrowed.

The protocols that remained standing had not survived because they were untouched. They had survived because they had been attacked repeatedly and had adapted. Parameters were strengthened. Proofs were clarified. Implementations were hardened.

What emerged from this process was not certainty (cryptography rarely offers that) but confidence earned through relentless doubt.

The community had not sought perfection.

It had sought resilience.

And resilience is forged only under pressure.

Chapter 4: The Promise of a Quantum-Safe Internet

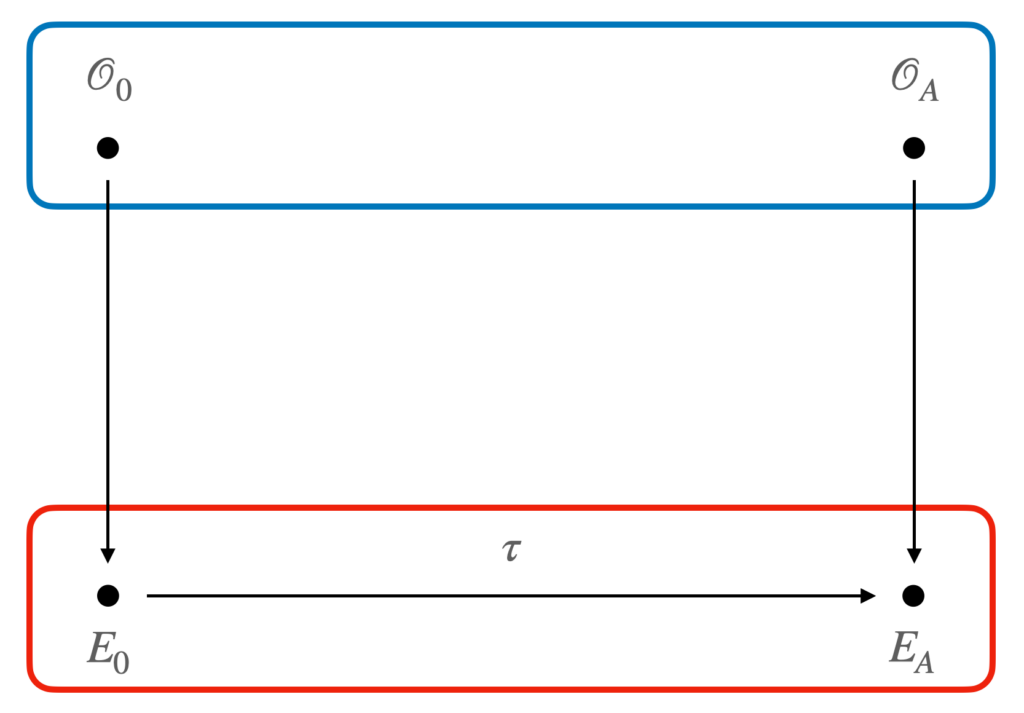

At last, after years of proposals, debates, and relentless testing, a Key Encapsulation Mechanism was chosen to become a standard. The decision belonged, in spirit, to the entire global community that had built and tested the candidates.

It might be tempting to see that moment as the finish line. In truth, it was only a milestone.

A standard is a carefully written document. It defines algorithms, parameters, test vectors, and security requirements. It does not automatically update the billions of devices already deployed. It does not rewrite legacy software. It does not persuade companies to invest in costly migrations. It does not resolve geopolitical tensions about whose technology should be trusted.

The announcement was followed not by universal celebration, but by questions.

Some companies hesitated. Updating cryptographic infrastructure is expensive and risky. Systems that secure financial transactions, industrial control networks, or medical records cannot simply be replaced overnight. Compatibility with existing protocols must be preserved. Performance must be measured. Certifications must be renewed. For organizations operating at global scale, even small changes ripple outward in unpredictable ways.

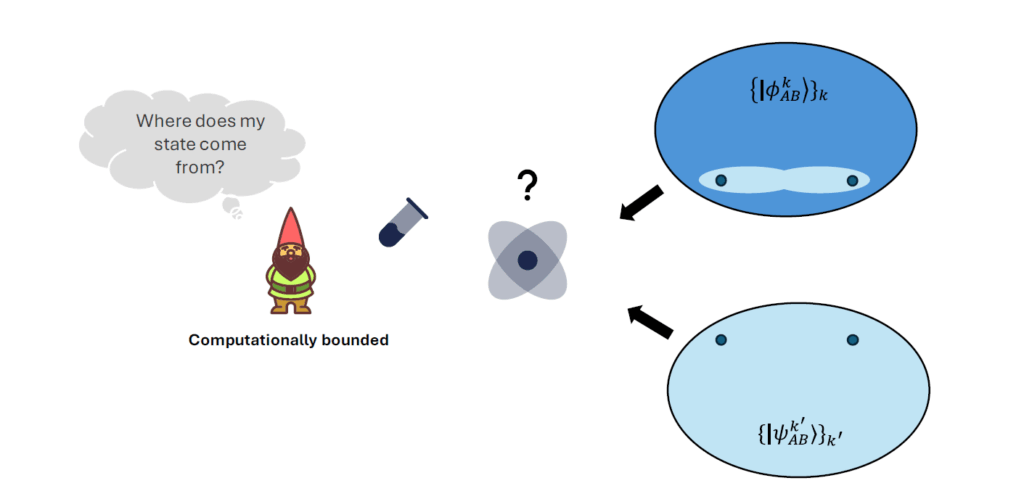

Others raised technical concerns. Would these new mathematical assumptions remain solid for decades? What if future research revealed unexpected weaknesses? Is it wiser to migrate immediately, or to wait and observe?

And beneath it all lay a quieter problem: knowledge.

The new protocols were the product of years of specialized research. But the engineers responsible for deploying them were not necessarily cryptographers. They needed clear documentation, reference implementations, security guidelines, and migration strategies. They needed to understand not just what to implement, but why certain design choices had been made, and what could go wrong if they were altered.

So the role of the scientific community shifted once again.

Researchers became educators and translators. They wrote implementation guides and explanatory papers aimed at practitioners rather than theorists. They collaborated with standards bodies and industry groups. They contributed to open-source libraries to lower the barrier to adoption. They organized workshops to train engineers, auditors, and policymakers. They also listened.

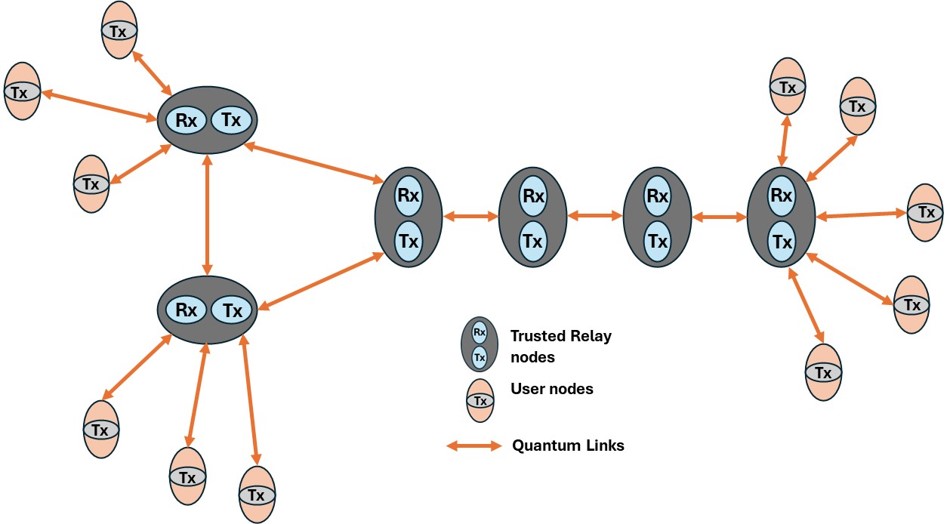

Concerns about cost, performance, and interoperability were not dismissed as resistance. They were treated as engineering realities. The vision of a quantum-safe internet could not be achieved by mathematical correctness alone; it required coordination across sectors, countries, and generations of technology.

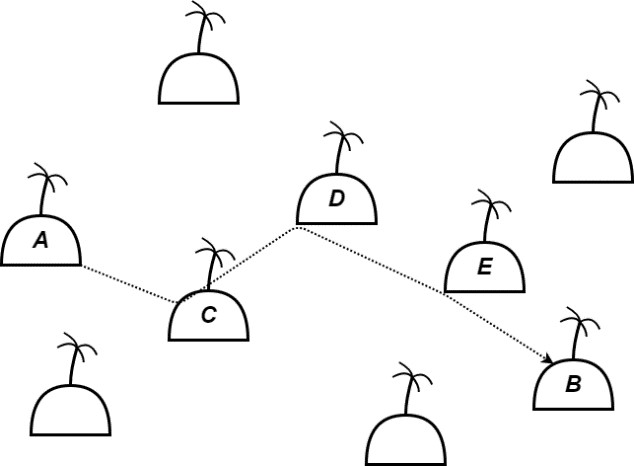

The transition would not happen in a single update. It would unfold gradually: hybrid deployments combining classical and quantum-resistant protocols, pilot projects in critical infrastructure, cautious rollouts in consumer software. Some systems (satellites, embedded devices, long-lived hardware) would remain vulnerable for years simply because replacing them was impractical.

And throughout this transition, uncertainty remained. Standardized does not mean unbreakable. The new protocols would continue to be analyzed, challenged, and tested by future researchers. Vigilance would not end with publication; it would become permanent.

The story, then, does not conclude with a triumphant solution. It continues in classrooms where new engineers learn these protocols. In companies debating migration timelines. In research labs still probing for weaknesses. In policy discussions about global coordination.

Standardization marked the moment when preparation became official.

The real work of building a quantum-safe internet began the day after.
