STORY OF THE MONTH

Beyond Einstein: Bell Tests and the Limits of Local Hidden Variables.

Nov 2025

Matías R. Bolaños Wagner

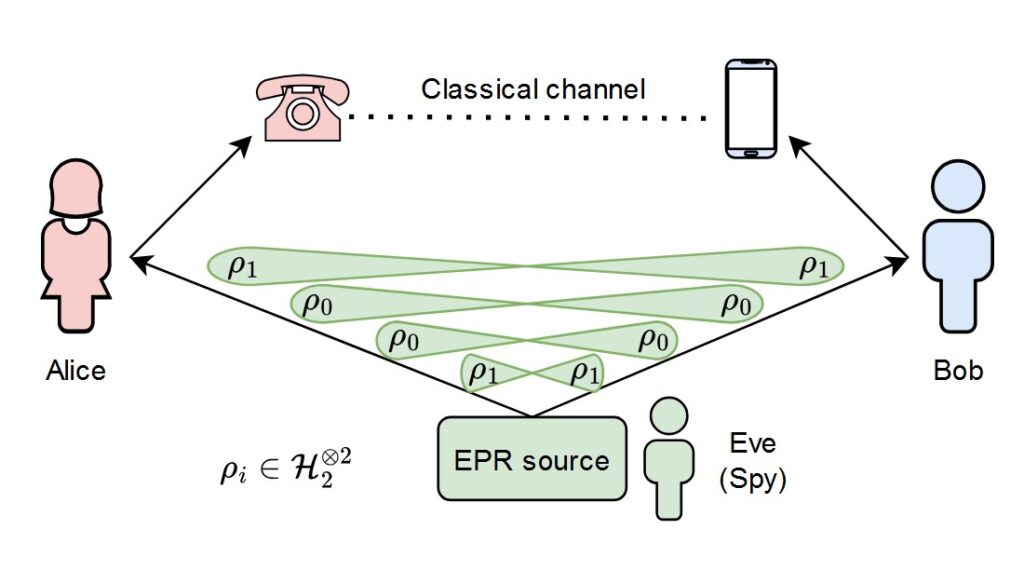

In 1935, Albert Einstein, Boris Podolsky, and Nathan Rosen published a paper that would haunt physics for nearly a century. They argued that if Quantum Mechanics were ‘complete,’ measuring one particle would instantly fix the state of its distant partner, a kind of ‘spooky action at a distance’ (Einstein’s later phrase) that seemed to violate the very structure of space and time. The natural way to save local realism was to assume that particles carry ‘hidden variables’: a secret internal blueprint that determines measurement outcomes long before the two observers, conventionally called Alice and Bob, ever look at their detectors.

For decades, this was dismissed as ‘philosophy.’ That changed in 1964 when John Bell turned philosophy into a testable inequality.

Breaking local realism: The CHSH Inequality

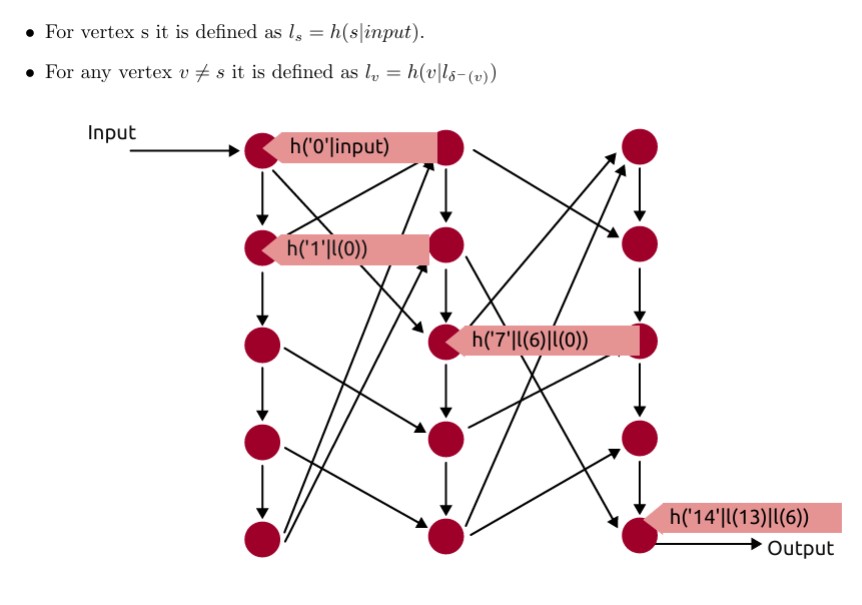

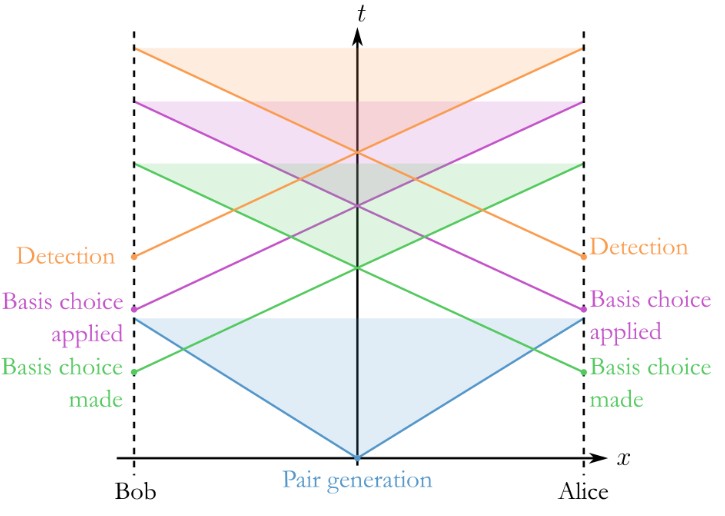

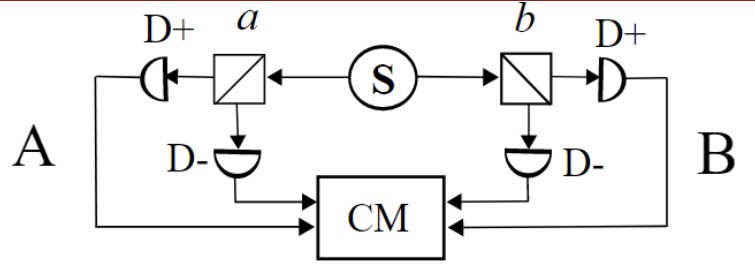

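To understand a loophole, one must first understand the ‘fence’ we are trying to build. In a standard Bell test (specifically the CHSH version, after Clauser, Horne, Shimony, and Holt), Alice chooses between two measurement settings a and a′, Bob between b and b′, and we measure the correlation between their results on two distant particles. If the world follows Einstein’s local hidden variables, the correlation value S must obey:

$$S = \left| E(a, b) - E(a, b') + E(a', b) + E(a', b') \right| \le 2$$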

Here E(a, b) is the expectation value of the product of Alice’s and Bob’s ±1 outcomes for settings a and b. Quantum Mechanics, however, predicts that for entangled states S can reach (the Tsirelson bound):

$$S = 2\sqrt{2} \approx 2.83$$

Whenever we measure a value significantly greater than 2, we have ‘violated’ Bell’s inequality.
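To make the gap between 2 and 2√2 concrete, here is a small Python sketch (an illustration added for this article; the function names are hypothetical). It brute-forces all 16 local deterministic strategies, in which each side pre-assigns an outcome of ±1 to each of its two settings, and evaluates the quantum prediction using the textbook correlation E(α, β) = cos 2(α − β) for polarization-entangled photons in the state |Φ+⟩, at the standard optimal angles 0°, 45°, 22.5°, and 67.5°.

```python
import itertools
import math

# CHSH signs for the setting pairs (Alice, Bob): 0 = unprimed, 1 = primed.
# S = E(a,b) - E(a,b') + E(a',b) + E(a',b')
SIGNS = {(0, 0): +1, (0, 1): -1, (1, 0): +1, (1, 1): +1}


def chsh(E):
    """Combine the four correlators E[(i, j)] into the CHSH value S."""
    return sum(SIGNS[key] * E[key] for key in SIGNS)


def best_local_deterministic_s():
    """Largest |S| over all 16 local deterministic strategies.

    Each party pre-assigns +1 or -1 to each of its two settings; the
    correlator for a setting pair is the product of the two local outcomes.
    """
    best = 0
    for A0, A1, B0, B1 in itertools.product((+1, -1), repeat=4):
        A, B = (A0, A1), (B0, B1)
        E = {(i, j): A[i] * B[j] for i in (0, 1) for j in (0, 1)}
        best = max(best, abs(chsh(E)))
    return best


def quantum_s(alice_deg, bob_deg):
    """CHSH value for photons in |Phi+>, where E(alpha, beta) = cos(2(alpha - beta))."""
    E = {
        (i, j): math.cos(2 * math.radians(alice_deg[i] - bob_deg[j]))
        for i in (0, 1)
        for j in (0, 1)
    }
    return chsh(E)


print(best_local_deterministic_s())        # 2
print(quantum_s((0, 45), (22.5, 67.5)))    # ~2.828, i.e. 2*sqrt(2)
```

Probabilistic local hidden-variable models are just mixtures of these deterministic strategies, so they cannot exceed 2 either; only the quantum correlations reach 2√2.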

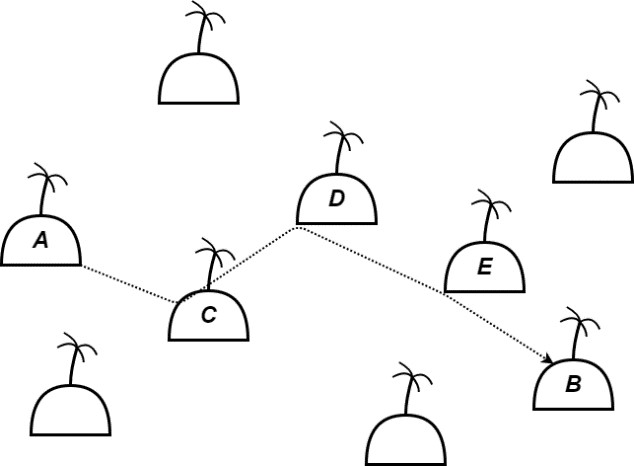

1. The Locality Loophole: Is the action truly nonlocal?

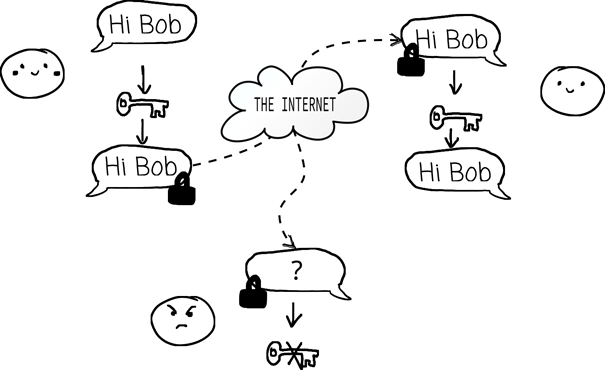

The most intuitive loophole is communication. If information about Alice’s measurement setting could reach Bob’s station before his measurement is complete (or vice versa), the experiment would no longer test locality at all: an ordinary signal could coordinate the results and ‘fake’ a Bell violation.

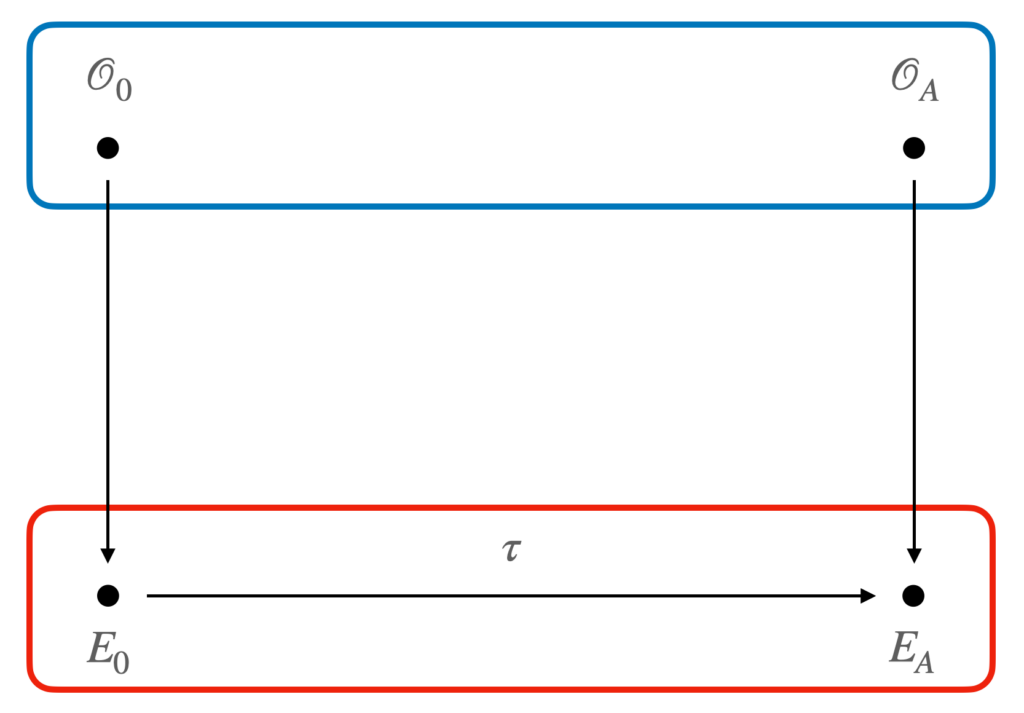

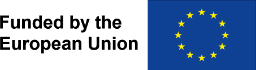

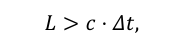

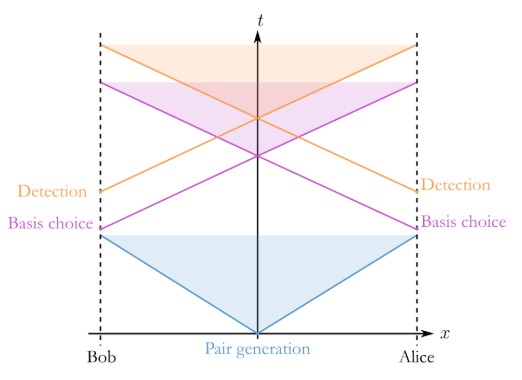

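To close this, we must enforce “space-like separation”. If L is the distance between Alice and Bob, and Δt is the time from a station’s setting choice to the end of its measurement, then a signal travelling at the speed of light c must not be able to cross L within Δt, so we require:

$$\Delta t < \frac{L}{c}$$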

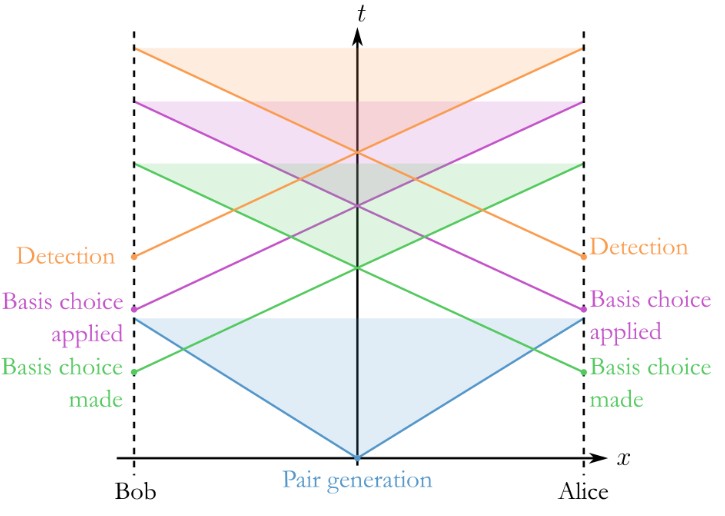

This condition is easy to display on a space-time (Minkowski) diagram by tracing the “light cone” of an event, here Alice’s (or Bob’s) measurement setting choice. If Bob’s detection falls outside the light cone of Alice’s setting choice, and vice versa, we say these events are space-like separated.
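The space-like separation condition can also be checked numerically. This Python sketch treats events as (time, position) pairs in one spatial dimension; the 1 km separation and 2 µs measurement window are hypothetical numbers chosen for illustration, not figures from any specific experiment, and the function names are likewise illustrative.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def is_spacelike(event_1, event_2):
    """True if two events (t in s, x in m), in one spatial dimension, lie
    outside each other's light cones, i.e. c * |dt| < |dx|."""
    (t1, x1), (t2, x2) = event_1, event_2
    return C * abs(t2 - t1) < abs(x2 - x1)


def max_measurement_time(separation_m):
    """Longest setting-choice-to-detection window keeping the stations
    space-like separated: dt < L / c."""
    return separation_m / C


# Hypothetical layout: stations 1 km apart, 2 microseconds from choice to detection.
L = 1_000.0
dt = 2e-6
alice_choice = (0.0, 0.0)   # Alice picks her setting at t = 0, x = 0
bob_detection = (dt, L)     # Bob's detection completes dt later, a distance L away

print(max_measurement_time(L))                     # ~3.34e-06 s
print(is_spacelike(alice_choice, bob_detection))   # True: c*dt is about 600 m < 1000 m
```

A real test has to check the mirror-image pair as well (Bob’s setting choice against Alice’s detection) and must count the whole chain from random-number generation to the recorded click inside Δt.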

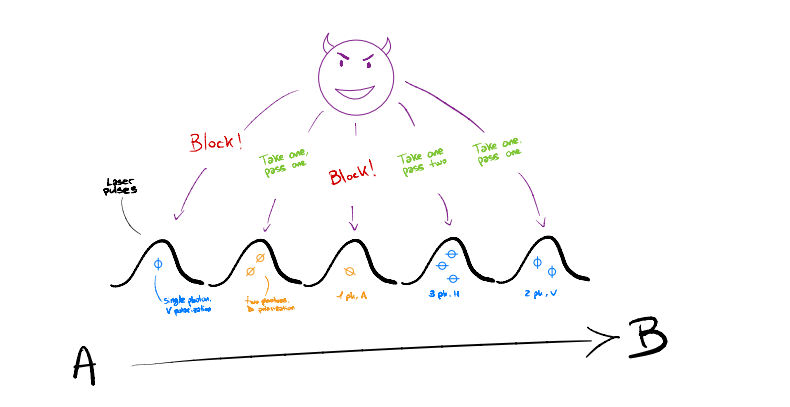

2. The Detection Loophole: The ‘Fair Sampling’ Trap

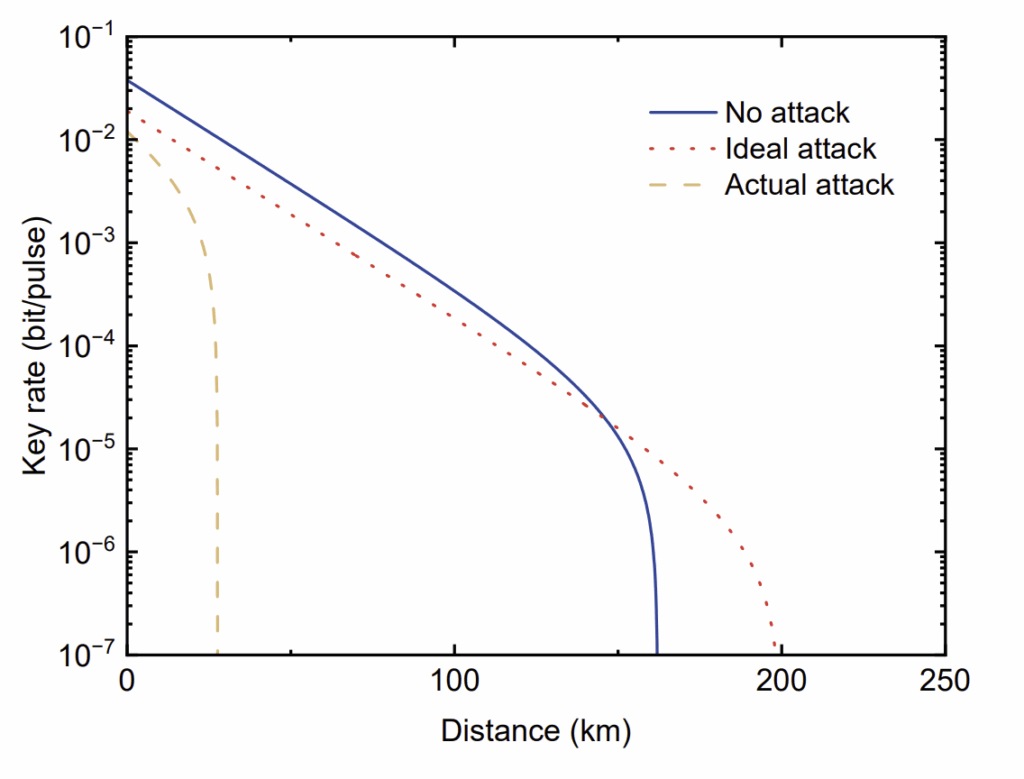

In any real-world experiment, detectors aren’t perfect: we lose photons in fibers, lenses, and sensors. The Detection Loophole suggests that the universe might be ‘cherry-picking’ which photons we see. If we detect only a small fraction of the pairs, the detected subset might show a violation while the missed ones would have restored the S ≤ 2 limit. Assuming that the detected pairs are representative of all of them is known as the ‘fair sampling’ assumption.
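The worry is not idle: a purely local model can exploit discarded events. The Python sketch here is a deliberately extreme toy model, invented for illustration and not a description of any real experiment: the source ‘guesses’ a setting pair, and each detector fires only when its own setting matches its guess. Keeping only coincidences then yields S = 4, above even the quantum value 2√2, while each detector clicks only half the time.

```python
import random

# CHSH signs for the setting pairs (Alice, Bob): 0 = unprimed, 1 = primed.
SIGNS = {(0, 0): +1, (0, 1): -1, (1, 0): +1, (1, 1): +1}


def post_selected_chsh(trials, seed=1):
    """Toy local model exploiting the detection loophole.

    Returns the CHSH value computed from coincidences only, and the
    fraction of emitted pairs that produced a coincidence.
    """
    rng = random.Random(seed)
    sums = {k: 0 for k in SIGNS}
    counts = {k: 0 for k in SIGNS}
    for _ in range(trials):
        # Hidden variable fixed at the source: a guessed setting pair plus
        # outcomes whose product has the CHSH sign "wanted" for that pair.
        guess_a, guess_b = rng.randrange(2), rng.randrange(2)
        out_a = rng.choice((+1, -1))
        out_b = out_a * SIGNS[(guess_a, guess_b)]
        # Settings are chosen freely, independently of the source.
        a, b = rng.randrange(2), rng.randrange(2)
        # Local rule: each detector clicks only if its own setting matches its guess.
        if a == guess_a and b == guess_b:
            sums[(a, b)] += out_a * out_b
            counts[(a, b)] += 1
    E = {k: sums[k] / counts[k] for k in SIGNS}
    s = sum(SIGNS[k] * E[k] for k in SIGNS)
    return s, sum(counts.values()) / trials


s, kept = post_selected_chsh(100_000)
print(s, kept)  # S from coincidences only, and the kept fraction (about 1/4)
```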

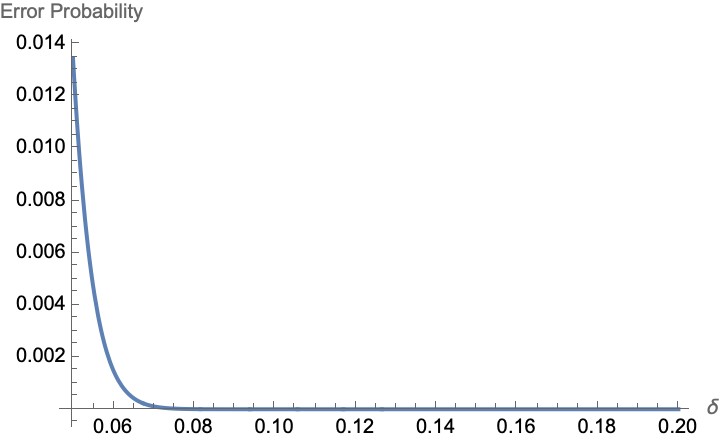

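To close this loophole without assuming fair sampling, the total detection efficiency η must exceed a critical threshold. For a maximally entangled state with equal efficiencies on both sides, this is:

$$\eta > \frac{2}{1 + \sqrt{2}} \approx 82.8\%$$

Using non-maximally entangled states can lower the requirement toward η > 2/3 (Eberhard’s bound).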
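One standard way to see where the threshold for a maximally entangled state comes from is sketched here, assuming equal efficiency η on both sides, a maximally entangled state (so each party’s outcomes average to zero on their own, ⟨A_x⟩ = ⟨B_y⟩ = 0), and every missed detection recorded as +1 so that no data is discarded:

$$
\begin{aligned}
E_{\text{obs}}(x, y) &= \eta^2 E_q(x, y) + \eta(1-\eta)\left(\langle A_x\rangle + \langle B_y\rangle\right) + (1-\eta)^2 = \eta^2 E_q(x, y) + (1-\eta)^2 \\
S_{\text{obs}} &= \eta^2 S_q + 2(1-\eta)^2 \\
S_{\text{obs}} > 2 &\iff \eta^2\left(2\sqrt{2} + 2\right) - 4\eta > 0 \quad (\text{with } S_q = 2\sqrt{2}) \\
&\iff \eta > \frac{4}{2\sqrt{2} + 2} = \frac{2}{1 + \sqrt{2}} \approx 0.828
\end{aligned}
$$

The factor 2 in the second line appears because the constant (1 − η)² term enters the CHSH combination with signs +, −, +, +.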

Closing this loophole remains particularly hard with photons: propagation and coupling losses alone can be enough to push the overall efficiency below the critical threshold.
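A rough loss budget shows why. This Python sketch multiplies coupling, fiber, and detector efficiencies for one arm and compares the result with the 2/(1 + √2) threshold for maximally entangled states. The 0.2 dB/km attenuation (typical of telecom fiber at 1550 nm) and the 90% detector efficiency are assumptions for illustration, and the function names are hypothetical.

```python
import math

# CHSH efficiency threshold for a maximally entangled state, ~0.828
ETA_CRIT = 2 / (1 + math.sqrt(2))


def fiber_transmission(length_km, loss_db_per_km=0.2):
    """Fraction of photons surviving a fiber with the given attenuation (dB/km)."""
    return 10 ** (-loss_db_per_km * length_km / 10)


def overall_efficiency(length_km, detector_eff, coupling_eff=1.0, loss_db_per_km=0.2):
    """One arm's efficiency: source coupling x fiber transmission x detector."""
    return coupling_eff * fiber_transmission(length_km, loss_db_per_km) * detector_eff


for km in (0.1, 1, 5):
    eta = overall_efficiency(km, detector_eff=0.9)
    print(f"{km:>4} km: eta = {eta:.3f}, above threshold: {eta > ETA_CRIT}")
```

With these assumed numbers, the arm drops below threshold after roughly 1.8 km of fiber, before any coupling or optics losses are even counted.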

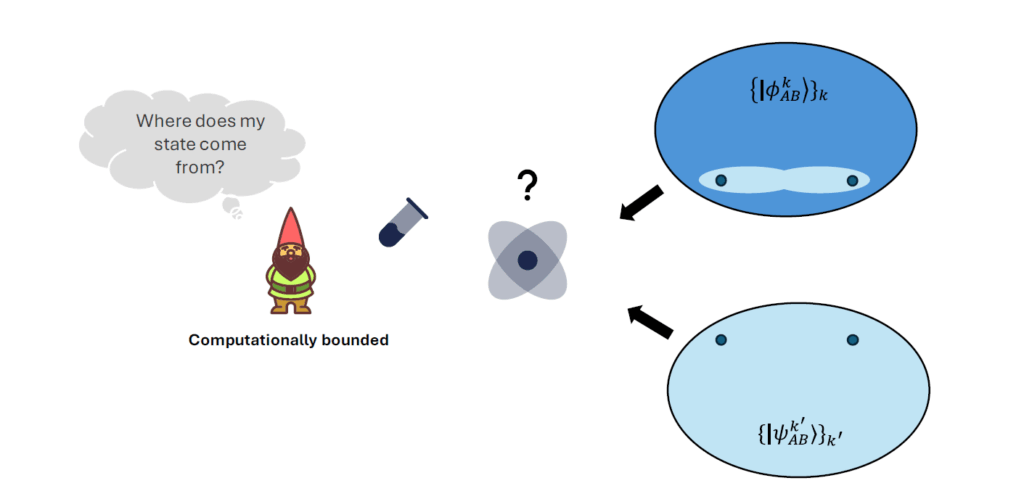

3. The Freedom-of-Choice Loophole: Do the experimentalists have free will?

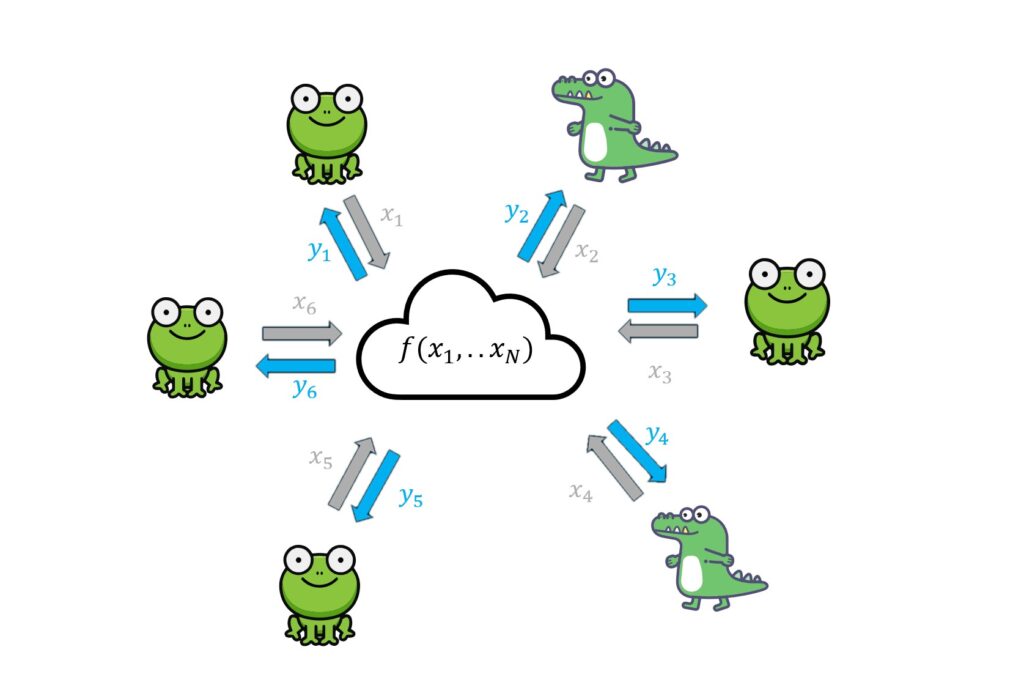

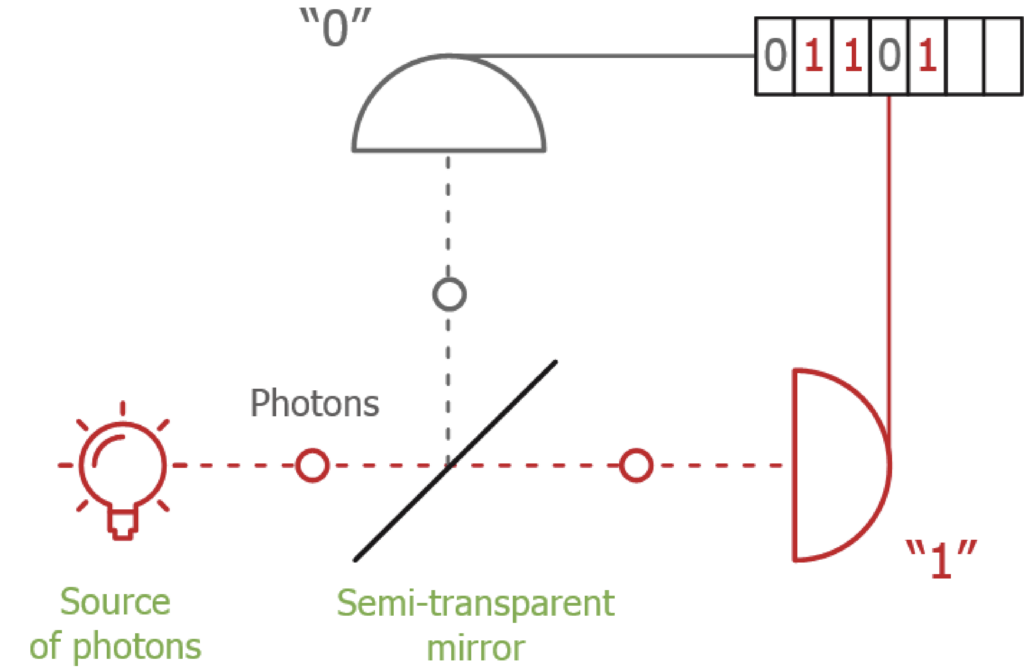

The Freedom-of-Choice loophole is perhaps the most philosophical, and particularly annoying, of the three. It attacks the assumption that Alice and Bob have full control over their experimental settings, in effect questioning the free will of the experimentalists. In a standard Bell test, we assume that the choice of measurement settings (a or a′ for Alice, b or b′ for Bob) is independent of the properties of the entangled particles. If a hidden variable could influence both the particles and the mechanism that chooses the settings, the resulting correlations could mimic quantum entanglement, even in a purely classical world.

To mitigate this, experimentalists use high-speed Quantum Random Number Generators (QRNGs). The goal is to choose each setting so quickly that no signal (limited by the speed of light) could have coordinated the choice with the particle’s “hidden” state.
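A toy Python model (invented for illustration, with hypothetical names) shows why measurement independence matters so much. Here a hidden variable fixed in the common past decides both the outcomes and the ‘random’ settings. The model is completely local and every pair is detected, yet it reaches S = 4, beyond even the quantum limit, while the setting statistics still look perfectly fair.

```python
import random

# CHSH signs for the setting pairs (Alice, Bob): 0 = unprimed, 1 = primed.
SIGNS = {(0, 0): +1, (0, 1): -1, (1, 0): +1, (1, 1): +1}


def conspiratorial_chsh(trials, seed=7):
    """Toy local model that violates measurement independence.

    A hidden variable lam, fixed in the common past, determines both the
    outcomes and the setting choices. Returns S and how often each setting
    pair occurred.
    """
    rng = random.Random(seed)
    sums = {k: 0 for k in SIGNS}
    counts = {k: 0 for k in SIGNS}
    for _ in range(trials):
        lam_a, lam_b, lam_sign = rng.randrange(2), rng.randrange(2), rng.choice((+1, -1))
        # The setting generators look uniformly random but secretly copy lam.
        a, b = lam_a, lam_b
        # Each outcome is a function of lam only; nothing travels between stations.
        out_a = lam_sign
        out_b = lam_sign * SIGNS[(lam_a, lam_b)]
        sums[(a, b)] += out_a * out_b
        counts[(a, b)] += 1
    E = {k: sums[k] / counts[k] for k in SIGNS}
    s = sum(SIGNS[k] * E[k] for k in SIGNS)
    freqs = {k: counts[k] / trials for k in SIGNS}
    return s, freqs


s, freqs = conspiratorial_chsh(100_000)
print(s, freqs)  # S, and setting-pair frequencies that each look like ~1/4
```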

Is free will even real?

If we take the Freedom-of-Choice loophole to its logical extreme, we encounter Superdeterminism. This is a fringe but mathematically consistent theory which suggests that the universe is essentially “on rails.”

In a superdeterministic universe, everything, from the Big Bang to the specific random number generated in your lab today, is part of a pre-determined chain of events. If the universe is superdeterministic, there is no such thing as a “free” choice or a truly independent random variable. Every “random” setting Alice chooses was actually determined billions of years ago, potentially in perfect correlation with the particles being measured.

While most physicists find superdeterminism unpalatable because it negates the very concept of experimental science (the ability to perform independent interventions), it remains the ultimate “un-closable” loophole. If the world is superdeterministic, then Bell’s Theorem doesn’t prove “spookiness”; it simply reflects a cosmic script that we are all following.

By using light from distant quasars to determine measurement settings (as seen in recent “Cosmic Bell” experiments), researchers have pushed the potential origin of such a “conspiracy” back billions of years, narrowing the window for anything other than a fully pre-destined universe to explain away the results.

Since superdeterminism cannot, at the end of the day, be ruled out by any experiment, we normally assume that the oldest event that could influence the particles is their own generation. With this in mind, the condition becomes: each measurement setting choice must be space-like separated from the pair-generation event itself.
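In a simplified one-dimensional picture, this condition becomes a timing window. A station at distance d from the source must make its setting choice within d/c of the emission event: not earlier, when the choice could have influenced the source, and not later, when a signal from the source could have reached it. This Python sketch assumes straight-line vacuum distances; the 100 m distance and the function names are hypothetical.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def setting_choice_window(source_to_station_m, t_emission=0.0):
    """Open time interval (s) in which a station's setting choice is
    space-like separated from the pair-emission event at the source."""
    half_width = source_to_station_m / C
    return (t_emission - half_width, t_emission + half_width)


def choice_is_independent(t_choice, source_to_station_m, t_emission=0.0):
    """True if the choice lies outside the emission event's light cone."""
    lo, hi = setting_choice_window(source_to_station_m, t_emission)
    return lo < t_choice < hi


print(setting_choice_window(100.0))          # roughly (-3.34e-07, 3.34e-07) s
print(choice_is_independent(200e-9, 100.0))  # True
print(choice_is_independent(500e-9, 100.0))  # False: the source could have signalled it
```

For a station 100 m from the source the window is only about ±334 ns, which is one reason fast QRNGs matter.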

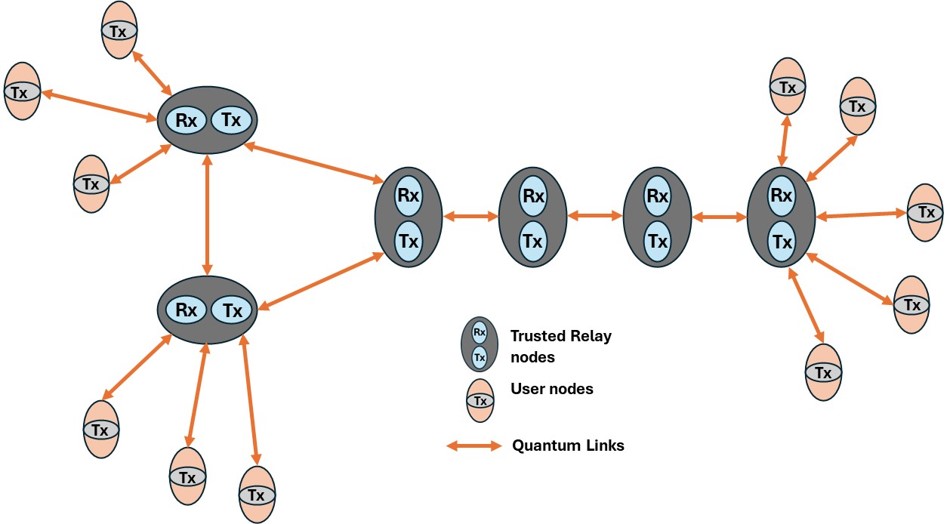

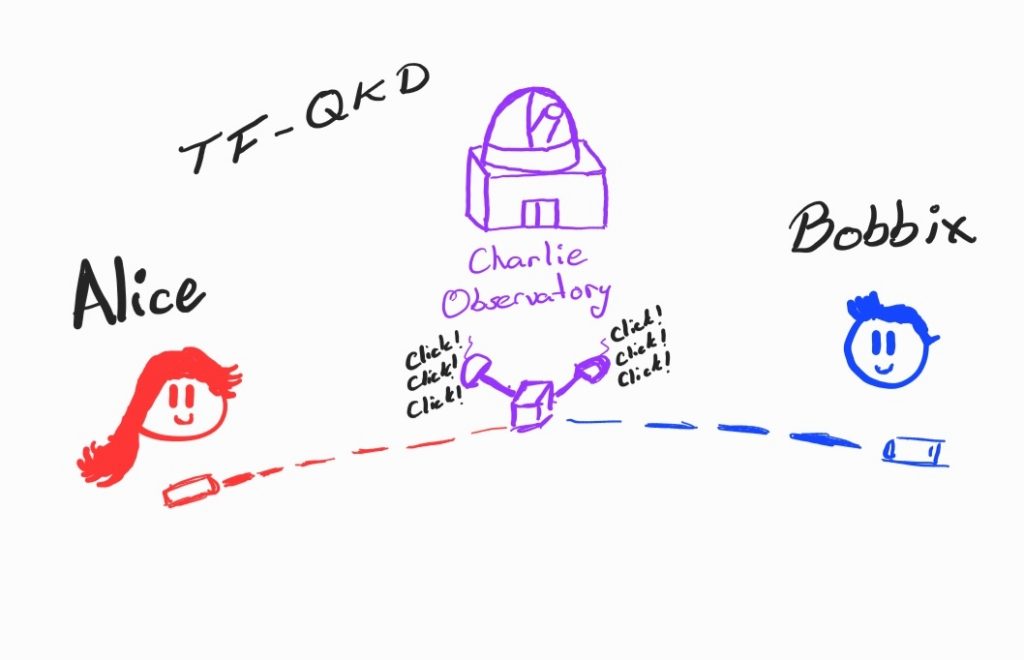

Why It Matters: The ‘Device-Independent’ Shield

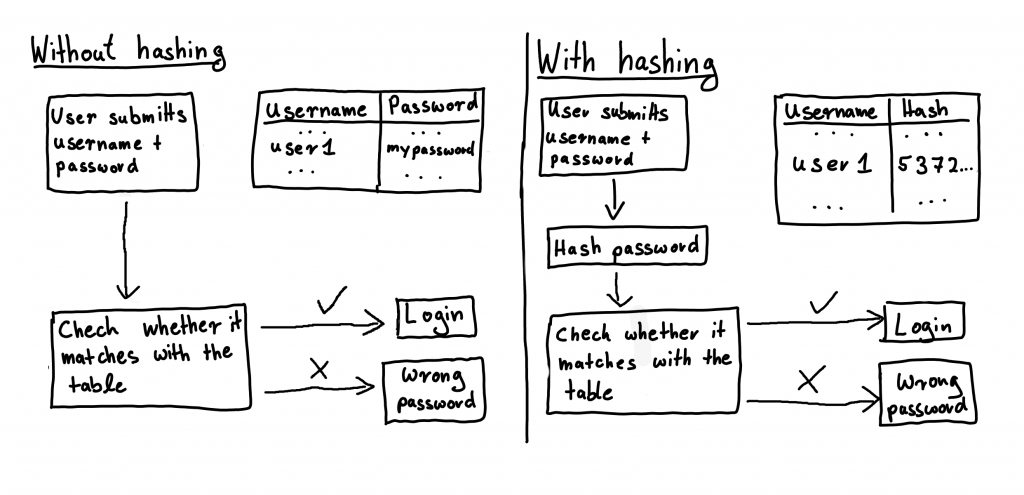

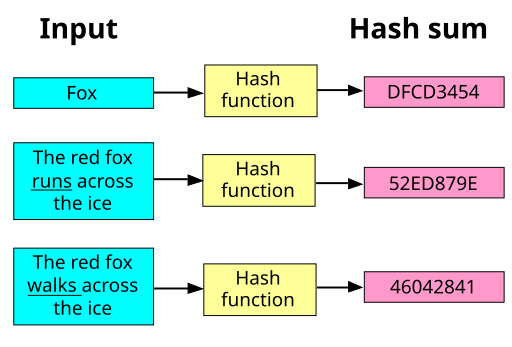

Closing these loopholes isn’t just about winning an old argument. It is about trust. In Device-Independent QKD (DI-QKD), the Bell violation itself is the proof of security. If you observe a sufficiently large violation (S well above 2) in a loophole-free setting, the violation itself bounds how much information any eavesdropper could hold about the key, regardless of whether you trust your hardware.
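To make ‘the violation bounds the eavesdropper’ quantitative, here is a Python sketch of a well-known device-independent key-rate bound against collective attacks (Pironio et al., 2009), written in terms of the CHSH value S and the quantum bit error rate Q: r ≥ 1 − h(Q) − h((1 + √((S/2)² − 1))/2), where h is the binary entropy. It ignores finite-size effects and is an illustration rather than a complete security analysis; the function names are hypothetical.

```python
import math


def binary_entropy(p):
    """h(p) = -p log2 p - (1 - p) log2(1 - p), with h(0) = h(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def di_qkd_key_rate(S, qber):
    """Lower bound on secret key bits per round (collective attacks).

    A value <= 0 means this bound certifies no key at all.
    """
    S = min(S, 2 * math.sqrt(2))  # cap at the Tsirelson bound; also guards rounding
    eve_term = binary_entropy((1 + math.sqrt(max(0.0, (S / 2) ** 2 - 1))) / 2)
    return 1 - binary_entropy(qber) - eve_term


for S, Q in ((2.0, 0.0), (2.7, 0.02), (2 * math.sqrt(2), 0.0)):
    print(f"S = {S:.3f}, Q = {Q:.2f}: r >= {di_qkd_key_rate(S, Q):.3f}")
```

At S = 2 the eavesdropper term equals 1 and no key survives; at the quantum maximum 2√2 with no errors, the bound reaches one secret bit per round.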

By closing these experimental loopholes, we are effectively getting one step closer to building the most secure communication network in human history.
